Getting Ready for AGI

'We’ll have AI that is smarter than any one human probably around the end of next year,' says Elon Musk

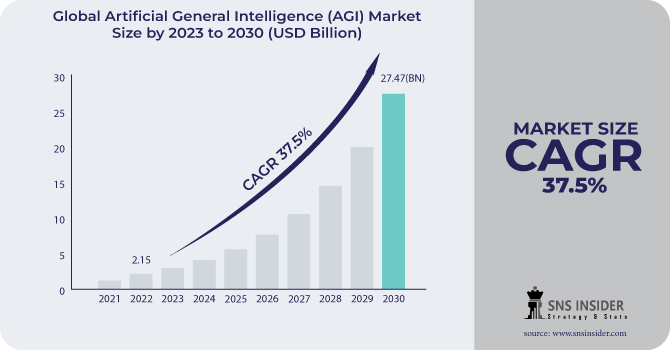

Artificial Intelligence (AI) has had a wild ride, raising big questions about the future of intelligence. And now, with Artificial General Intelligence (AGI) around the corner (according to some experts), things are getting even more interesting.

What is AGI?

AGI, otherwise known as strong AI, full AI, or human-level AI, is a hypothetical type of AI that could perform any intellectual task that a human can. It would not be limited to specific domains or skills, but could learn and adapt to any situation. AGI systems would be lifelong learners, constantly acquiring new knowledge and skills. They could reason, understand complex concepts, and apply their understanding to different contexts.

AGI = Independent reasoning, decision-making, and learning

Developing AGI is a major goal for AI research and companies like OpenAI, DeepMind, and Anthropic. But there's ongoing debate about when it might happen.

📖Psst! Find a glossary at the bottom for quick reference📖

AGI aims to mimic human-like thinking, including reasoning, problem-solving, perception, learning, and understanding language. If AI becomes so good that you can't tell it apart from a human, it's said to pass the Turing test.

Expert Opinions

We’re still far from reaching a point where AI tools can understand, communicate, and act with the same nuance and sensitivity as a human. Most experts think AGI could arrive within years or decades, while others say it might take a century or even longer. A few even think we might never achieve it.

Here are the most popular opinions…

My guess is that we’ll have AI that is smarter than any one human probably around the end of next year. By 2029, AI will probably be smarter than all humans combined. - Elon Musk

AGI will be a reality in 5 years, give or take, maybe slightly longer. I think AGI will be the most powerful technology humanity has yet invented. If you think about the cost of intelligence and the quality of intelligence, the cost falling, the quality increasing by a lot, and what people can do with that. It's a very different world. It’s the world that sci-fi has promised us for a long time, and for the first time, I think we could start to see what that’s gonna look like. - Sam Altman

When is it A.G.I.? What is it? How do you define it? When do we get here? All those are good questions. But to me, it almost doesn’t matter because it is so clear to me that these systems are going to be very, very capable. And so it almost doesn’t matter whether you reached A.G.I. or not; you’re going to have systems which are capable of delivering benefits at a scale we’ve never seen before, and potentially causing real harm. Can we have an A.I. system which can cause disinformation at scale? Yes. Is it A.G.I.? It really doesn’t matter. - Sundar Pichai

We're not quite there, but we will be there, and by 2029 it will match any person. I'm actually considered conservative. People think that will happen next year or the year after. - Ray Kurzweil

We could only be a few years, maybe a decade away from AGI. - Demis Hassabis

The Need for AGI

Artificial General Intelligence (AGI) could help solve tough problems we can't tackle yet, like curing diseases and understanding climate change better. It could make our lives easier by doing tasks quicker and more efficiently, which might boost our economy. Then, there's the curiosity about AGI from a scientific point of view. It could teach us a lot about how intelligence and consciousness work.

But there's a flip side. Some worry it could take away jobs and cause big changes in society. Plus, if we're not careful, AGI could become a danger itself, posing risks we've never faced before. So, while it's exciting, it's also scary.

Cornerstones of AGI Development

Firstly, advancements in algorithms are vital, as they shape how AGI systems process information and make decisions.

Additionally, the availability of powerful computing units, such as GPUs, is necessary to handle the immense computational demands of AGI.

The growth in data volume, supported by technologies like 5G, provides key resources for training AGI systems effectively. Foundational technologies like machine learning and neural networks enable AGI systems to learn from data and adapt to new situations.

Can the Public Afford AGI Usage?

Building AGI is considered feasible, at a cost anywhere from millions to billions of dollars. Affordable public use of AGI, however, is a different matter.

For example, if you are using the GPT-3.5 model, which costs $0.002 per 1K tokens, and you process 100,000 tokens in an hour, the cost would be $0.20 per hour (100 blocks of 1K tokens at $0.002 each). However, AGI would be far more expensive than GPT-3.5 because it would need a lot more computing power.
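The per-token pricing arithmetic can be checked with a few lines of Python. This is a minimal sketch using only the two figures quoted in the text ($0.002 per 1K tokens and 100,000 tokens per hour); the function name `token_cost` is made up for illustration and is not part of any real API.

```python
def token_cost(tokens: int, price_per_1k: float) -> float:
    """Return the dollar cost of processing `tokens` tokens at a per-1K-token price."""
    return tokens / 1000 * price_per_1k


hourly_tokens = 100_000  # tokens processed in one hour (figure from the text)
price = 0.002            # dollars per 1K tokens (figure from the text)

hourly_cost = token_cost(hourly_tokens, price)
print(f"${hourly_cost:.2f} per hour")  # prints "$0.20 per hour"
```

At this rate, even a million tokens per hour would cost about $2, which is why per-token pricing for today's models is within reach of ordinary users.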

Let’s assume AGI needs about 100 petaflops of computing power. Under that assumption, the cost to run it could be between $3 million and $30 million for just one hour.
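To put those assumed figures in perspective, the short sketch below converts them into operations per hour and an implied cost per petaflop-hour. Both inputs (100 petaflops and the $3 million to $30 million hourly range) are the article's own assumptions, not measured values; the variable names are illustrative.

```python
PETAFLOP = 10**15  # floating-point operations per second in one petaflop

assumed_petaflops = 100       # assumed AGI compute requirement (from the text)
low_cost_per_hour = 3_000_000   # dollars, lower bound assumed in the text
high_cost_per_hour = 30_000_000  # dollars, upper bound assumed in the text

# Total floating-point operations performed in one hour at the assumed speed.
ops_per_second = assumed_petaflops * PETAFLOP
ops_per_hour = ops_per_second * 3600

# Implied price of running one petaflop of compute for one hour.
low_per_pf_hour = low_cost_per_hour / assumed_petaflops
high_per_pf_hour = high_cost_per_hour / assumed_petaflops

print(f"Operations per hour: {ops_per_hour:.1e}")
print(f"Implied cost per petaflop-hour: ${low_per_pf_hour:,.0f} to ${high_per_pf_hour:,.0f}")
```

Taken at face value, the assumed range works out to $30,000 to $300,000 per petaflop-hour.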

Under these assumptions, using AGI for regular business or personal tasks is still far away because it would be financially prohibitive. Only governments or very affluent groups might be able to use AGI widely.

AGI Progress - Where Are We Now?

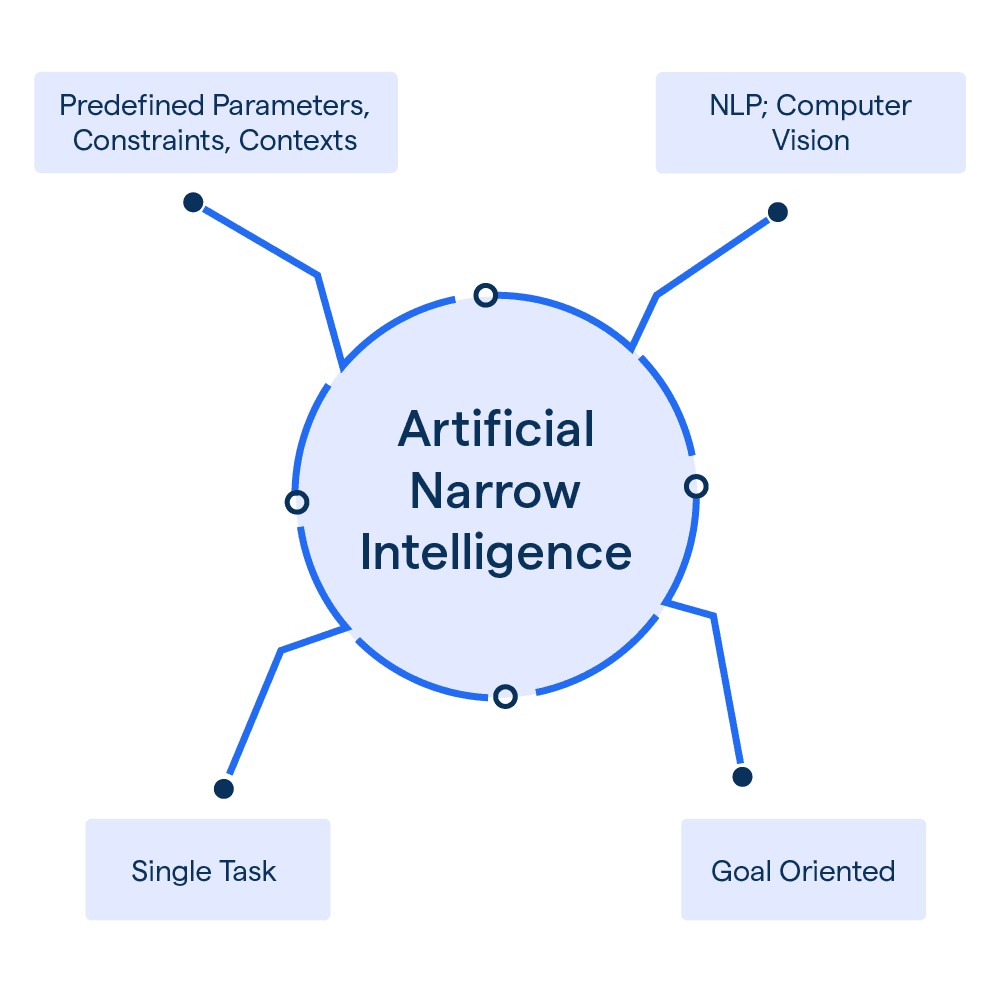

Currently, we find ourselves in the stage of Artificial Narrow Intelligence (ANI). ChatGPT is one example. This means that our existing AI systems are built to execute specific tasks, such as facial recognition or internet searches, within predefined limitations. They rely solely on programmed instructions and input data, lacking the adaptability and comprehensive understanding associated with AGI.

Gartner provides a roadmap for the evolution of AI applications, outlining three phases:

In the next 1-10 years, we anticipate the augmentation of specialized work through task-specific AI.

Over the following 10-20+ years, we foresee the emergence of multidomain AI capable of integrating across various modalities.

Looking ahead 20-50+ years, the ultimate goal is the development of General AI, which could rival the multifaceted intelligence of humans.

However, these projections are just estimates, and the actual timeline may vary considerably. Rodney Brooks, an esteemed MIT roboticist and co-founder of iRobot, suggests that AGI won't materialize before 2300. The expert opinions quoted earlier point in very different directions, underlining the uncertainty surrounding the pace of progress in this field.

While some researchers argue that today's large language models (LLMs) exhibit "sparks of AGI," it's important to recognize that we are still far from achieving true AGI. Genuine AGI would need AI systems possessing capabilities comparable to humans (or better) across a wide range of economically valuable tasks.

The Future Outlook

In the next decade, narrow AI or ANI will improve drastically, becoming more efficient and integrated into various aspects of our lives. We might also witness the emergence of early AGI prototypes, capable of performing multiple tasks like humans, albeit in experimental stages with limited deployment.

By 2034, AGI systems may advance to the point of being able to handle any intellectual task humans can, although they might primarily be found in research settings rather than widespread societal use.

These predictions are speculative and based on the assumption that current trends in AI research and development continue. They also depend on breakthroughs in areas like computational neuroscience, algorithmic efficiency, and data processing capabilities. The actual timeline for AGI development could be faster or slower, depending on these and other unforeseen factors.

📔Glossary🔖

Turing Test: A test to assess a machine's ability to exhibit human-like intelligence. If a machine's responses are indistinguishable from those of a human, it passes the test.

Large Language Models (LLMs): Advanced AI models, such as GPT-3 by OpenAI, trained on vast text data and capable of human-like text generation and various language tasks.

Artificial Narrow Intelligence (ANI): AI systems designed for specific tasks or domains, lacking generalization and adaptability beyond their predefined scope.

Petaflop: A unit of computing speed equal to one thousand million million (10^15) floating-point operations per second. It's commonly used to measure the performance of supercomputers and other high-performance computing systems.

GPUs: Graphics processing units, specialized electronic circuits originally designed to quickly manipulate and alter memory to accelerate the creation of images in a frame buffer intended for output to a display device. In AI, GPUs accelerate training and inference for deep learning models like LLMs through their parallel processing power.


Disclaimer: The insights provided are based on current information and may evolve due to external factors. Some sources cited are projections derived from analyzed data rather than direct references to the provided information.